ECE5725 Final Project

Navigation Car

A Project by Xingze Li and Guanlin Zhu.

Demonstration Video

Introduction

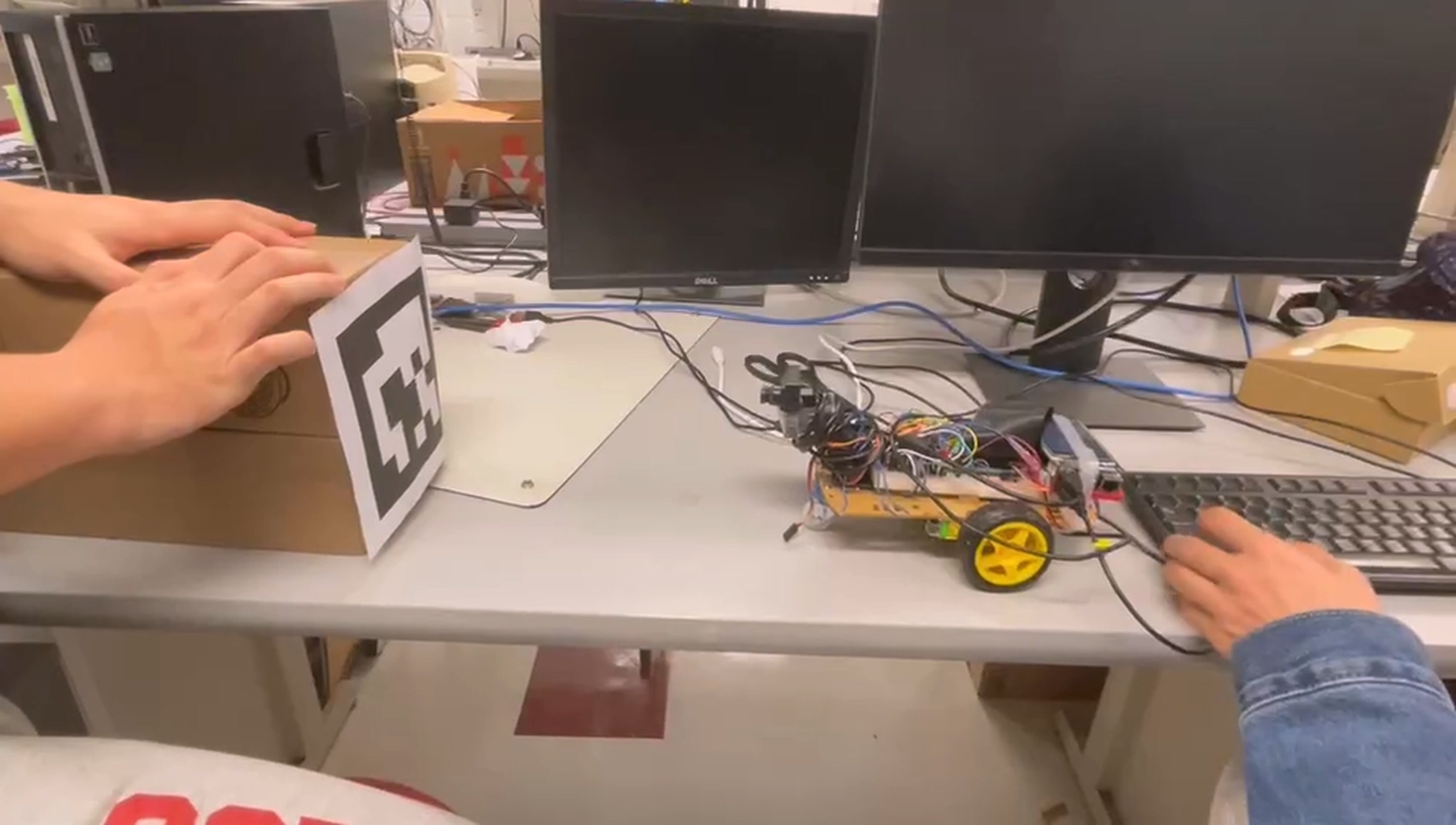

In this project, our group designed a navigation car that guides people through an unfamiliar environment. For example, a freshman new to Phillips Hall who is struggling to find a professor's office can be led to the destination by this car. We mounted motors, a Raspberry Pi and an external camera on a robot car. Using the OpenCV library and AprilTags, the car performs detection while driving and searches for the destination indicated by the tags.

Project Objective:

- The final goal is for the car to run smoothly and follow several paths that we set in advance.

- The navigation car must drive straight over a long distance and detect tags accurately at a relatively high driving speed.

- Ideally, the path can be chosen on the touchscreen.

Design

On the hardware side, we assembled a robot car and equipped it with a camera. To improve detection, we added a gimbal so that the camera could rotate when needed. Both the servo motor and the gimbal are controlled by a PWM module.

On the software side, the Raspberry Pi receives the camera's video stream through the OpenCV library. Paths are defined with AprilTags: by placing different tags along the path map, each path can be stored as a list of tags in a specific order. While the camera streams video to the Raspberry Pi, we call the apriltag library to check whether a tag appears in the camera's view. Because we use the tag36h11 family, the detector reports the ID of each tag from this family that it finds. From the tag's width in pixels, taken from the corner coordinates in the detection result, we estimate the distance between the camera and the tag, and once that distance falls below a threshold the car makes its next move, such as turning left or stopping. Keeping the car driving straight is equally important; we do this by adjusting the motors' duty cycles based on the distances between the tag and the left and right edges of the camera frame. Once these separate parts worked, we combined them using multiprocessing in Python.
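The distance calculation described above is the pinhole-camera relation used in the appendix code: a tag of known physical width W (0.173 m) that appears w pixels wide, seen through a lens with calibrated focal length F in pixels (790), is roughly D = W * F / w metres away. F itself can be calibrated once from an image taken at a known distance (0.7 m in the appendix) as F = D * w / W. The following is a minimal sketch of that relation; the function names are hypothetical, and measuring the width as the mean length of the edges AB and DC (instead of the horizontal gap between two corners, as the appendix does) is our assumption, which makes the estimate somewhat less sensitive to in-plane rotation of the tag.

```python
import math

# Values taken from the appendix code.
KNOWN_WIDTH_M = 0.173   # physical side length of the printed tag (metres)
FOCAL_LENGTH_PX = 790   # calibrated focal length (pixels)


def calibrate_focal_length(known_distance_m, known_width_m, width_px):
    """F = D * w / W, from one image of the tag taken at a known distance."""
    return known_distance_m * width_px / known_width_m


def tag_width_px(corners):
    """Mean length of edges AB and DC, given four (x, y) corners in detector order."""
    (ax, ay), (bx, by), (cx, cy), (dx, dy) = corners
    return (math.hypot(bx - ax, by - ay) + math.hypot(cx - dx, cy - dy)) / 2.0


def estimate_distance(width_px, known_width_m=KNOWN_WIDTH_M,
                      focal_length_px=FOCAL_LENGTH_PX):
    """D = W * F / w; returns -1.0 (the appendix's 'no tag' value) for a non-positive width."""
    if width_px <= 0:
        return -1.0
    return known_width_m * focal_length_px / width_px


if __name__ == "__main__":
    corners = [(500.0, 300.0), (773.3, 300.0), (773.3, 573.3), (500.0, 573.3)]
    w = tag_width_px(corners)
    print(f"width {w:.1f} px -> distance {estimate_distance(w):.2f} m")
```

Because D is inversely proportional to w, a fixed error of a few pixels matters much more for distant (small) tags than for nearby ones, which is one reason to trigger turns only at short range.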

Testing

We developed this project step by step. In the first version, the car simply drives in a straight line, detects a tag placed at the end of the path, and stops. Once this version passed the test, we placed more tags along the path; the task was still to drive along and stop, but every tag had to be detected, even though no extra response was required. After that, the car had to make a turn after driving straight for some time and passing some tags, and then continue straight ahead. In the final version, the car should follow several paths, both simple and complex. In addition, the user should be able to start the car by interacting with a PyGame interface on the Raspberry Pi's screen, but we could not get this to work correctly under multiprocessing.
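The staged tests above all reduce to following an ordered sequence of tags. The appendix code keeps a list of tag IDs per destination (for example `P0_tag_list = [10]`) and triggers a turn when the expected tag is seen closer than a distance threshold (0.8 m for most tags). The following is a minimal sketch of how that idea could be generalised to multi-tag routes; the `ROUTES` table, the tag IDs in it, the action names and the `RouteFollower` class are hypothetical illustrations, not the project's code.

```python
from dataclasses import dataclass

# Hypothetical route table: each route is an ordered list of (tag_id, action) steps.
# "pass" means the tag only has to be seen; any other action is executed on arrival.
ROUTES = {
    "straight_then_stop": [(0, "stop")],
    "pass_two_then_turn": [(10, "pass"), (11, "pass"), (12, "pivot_right")],
}


@dataclass
class RouteFollower:
    steps: list
    threshold_m: float = 0.8   # trigger distance used for most tags in the appendix
    index: int = 0             # position of the next expected tag in `steps`

    def update(self, tag_id, distance_m):
        """Return the motor action for one detection result (tag_id = -1 means no tag)."""
        if self.index >= len(self.steps):
            return "stop"                      # every step has fired: route finished
        expected_id, action = self.steps[self.index]
        if tag_id == expected_id and 0 < distance_m < self.threshold_m:
            self.index += 1                    # tag reached: advance to the next step
            return "forward" if action == "pass" else action
        return "forward"                       # keep driving toward the next tag


if __name__ == "__main__":
    follower = RouteFollower(ROUTES["pass_two_then_turn"])
    for tag, dist in [(10, 1.5), (10, 0.6), (11, 0.7), (12, 0.5), (-1, -1)]:
        print(tag, dist, follower.update(tag, dist))
```

Tracking only the *next* expected tag means a tag that comes into view out of order is simply ignored, so a stray detection cannot trigger a turn early.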

Result

We largely completed the navigation robot, and it functioned well in our tests. For testing, we placed several AprilTags in a rectangular area, and the robot detected them successfully. Once the distance between the robot and an AprilTag falls below the threshold set in our algorithm, the robot switches to the mode corresponding to that tag (e.g., for an AprilTag at a corner, the robot detects it and turns left or right as designed once the distance between the camera and the tag is less than 0.5 meter). In addition, the robot keeps itself driving in the correct direction by adjusting the PWM of both motors whenever the AprilTag deviates too far from the middle of the camera frame, and it performs well and stably when the AprilTags are placed densely along its path. Overall, most parts performed as planned, but we also met some problems along the way. The camera we adopted is too heavy for the gimbal to carry smoothly, so we abandoned controlling the camera with the gimbal and instead fixed the camera to the board using some of the gimbal's parts. We met 80%-90% of the goals outlined in the description.
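The heading correction described above corresponds to the following logic in the appendix code: the gaps between the tag and the left and right edges of the frame are combined into a normalised error in [-2, 2], and a dead band of 0.3 on either side of zero selects one of three fixed duty-cycle pairs. Here is a minimal, hardware-free sketch of that mapping; the function names are hypothetical, and the duty-cycle values are copied from the appendix, where the right motor runs at 89% instead of 95% when going straight, presumably to compensate for a mismatch between the two motors.

```python
def offset_error(left_edge_x, right_edge_x, frame_width):
    """Normalised horizontal offset of the tag: > 0 when it sits left of centre."""
    d_left = left_edge_x                    # gap between frame's left edge and tag
    d_right = frame_width - right_edge_x    # gap between tag and frame's right edge
    total = d_left + d_right
    if total <= 0:
        return 0.0                          # tag fills the frame: no usable offset
    return 2.0 * (d_right - d_left) / total


def steering_duty_cycles(err, deadband=0.3):
    """Return (left, right) duty cycles in percent, as chosen in the appendix code."""
    if err > deadband:
        return 82, 95    # tag is left of centre: slow the left wheel to steer left
    if err < -deadband:
        return 95, 82    # tag is right of centre: slow the right wheel to steer right
    return 95, 89        # within the dead band: nominal straight-line setting


if __name__ == "__main__":
    for left, right in [(540, 740), (100, 300), (980, 1180)]:
        err = offset_error(left, right, 1280)
        print(f"err={err:+.2f} -> duty cycles {steering_duty_cycles(err)}")
```

The dead band keeps the car from oscillating when the tag is nearly centred; a proportional controller (duty cycle varying continuously with `err`) would be a natural next step but is not what the project used.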

Future Work

We ran into many interesting and unexpected problems during this project. The camera we adopted is too heavy for the gimbal to carry, so I would prefer to switch to a Raspberry Pi camera, which a gimbal can hold; the robot would then be able to adjust the camera to detect tags from different angles. The motors' performance degrades as the batteries drain, so we had to replace the batteries frequently. I would like to use a rechargeable battery with a charging port on the board, which would be easy to recharge and is also environmentally friendly. In the last days of the project, we found that the robot might perform better if it could stop for a while to adjust the camera and the motor PWM automatically, since otherwise it may miss important tags that the camera cannot detect in time. Finally, equipping the robot with a module that lets it talk with people would make it more engaging for the people it serves.

Work Distribution

Project group picture

Xingze Li

xl834@cornell.edu

Designed most of the software architecture; testing.

Guanlin Zhu

gz243@cornell.edu

Designed all of the hardware architecture; testing.

Parts List

- Raspberry Pi $35.00

- Raspberry Pi Camera V2 $25.00

- LEDs, Resistors and Wires - Provided in lab

Total: $60.00

References

PiCamera Document

Tower Pro Servo Datasheet

Bootstrap

Pigpio Library

R-Pi GPIO Document

Multi-processing

Apriltag

Code Appendix


```python
import cv2
import math
import os
import time
from multiprocessing import Process, Queue, Value, Lock, Array
import subprocess
import RPi.GPIO as GPIO
import pygame
from pygame.locals import *
import apriltag

# ------------------------
# --------GPIO set--------
# ------------------------

def GPIO_init():
    GPIO.setmode(GPIO.BCM)
    # input pins with pull-up resistors (buttons)
    GPIO.setup(17, GPIO.IN, pull_up_down=GPIO.PUD_UP)
    GPIO.setup(22, GPIO.IN, pull_up_down=GPIO.PUD_UP)
    GPIO.setup(23, GPIO.IN, pull_up_down=GPIO.PUD_UP)
    GPIO.setup(27, GPIO.IN, pull_up_down=GPIO.PUD_UP)
    # left side control: 26 = PWM, 5/6 = direction
    GPIO.setwarnings(False)
    GPIO.setup(26, GPIO.OUT)
    GPIO.setup(5, GPIO.OUT)
    GPIO.setup(6, GPIO.OUT)
    # right side control: 16 = PWM, 20/21 = direction
    GPIO.setup(16, GPIO.OUT)
    GPIO.setup(20, GPIO.OUT)
    GPIO.setup(21, GPIO.OUT)
    # set initial state
    GPIO.output(5, GPIO.LOW)
    GPIO.output(6, GPIO.LOW)
    GPIO.output(20, GPIO.LOW)
    GPIO.output(21, GPIO.LOW)

# setup callback routines
def GPIO17_callback(channel):
    # drive forward at full speed
    pwm_l.ChangeDutyCycle(100)
    GPIO.output(5, GPIO.HIGH)
    GPIO.output(6, GPIO.LOW)
    pwm_r.ChangeDutyCycle(100)
    GPIO.output(20, GPIO.HIGH)
    GPIO.output(21, GPIO.LOW)

def GPIO22_callback(channel):
    # stop both motors
    GPIO.output(5, GPIO.LOW)
    GPIO.output(6, GPIO.LOW)
    GPIO.output(20, GPIO.LOW)
    GPIO.output(21, GPIO.LOW)

def GPIO23_callback(channel):
    pwm_l.ChangeDutyCycle(100)
    GPIO.output(5, GPIO.HIGH)
    GPIO.output(6, GPIO.LOW)
    pwm_r.ChangeDutyCycle(100)
    GPIO.output(20, GPIO.HIGH)
    GPIO.output(21, GPIO.LOW)

def GPIO27_callback(channel):
    # quit: signal all processes to stop and release the GPIO pins
    global run_flag
    global arrive_flag
    arrive_flag.value = 1
    run_flag.value = 0
    pwm_l.stop()
    pwm_r.stop()
    GPIO.cleanup()
    exit()

# default duty cycles (percent) for the left and right motors
l_cyc = 100
r_cyc = 89

def move_forward():
    global l_cyc
    global r_cyc
    pwm_l.ChangeDutyCycle(l_cyc)
    GPIO.output(5, GPIO.HIGH)
    GPIO.output(6, GPIO.LOW)
    pwm_r.ChangeDutyCycle(r_cyc)
    GPIO.output(20, GPIO.HIGH)
    GPIO.output(21, GPIO.LOW)
    #time.sleep(t)

def motor_stop():
    GPIO.output(5, GPIO.LOW)
    GPIO.output(6, GPIO.LOW)
    GPIO.output(20, GPIO.LOW)
    GPIO.output(21, GPIO.LOW)

def move_backward():
    pwm_l.ChangeDutyCycle(100)
    GPIO.output(5, GPIO.LOW)
    GPIO.output(6, GPIO.HIGH)
    pwm_r.ChangeDutyCycle(100)
    GPIO.output(20, GPIO.LOW)
    GPIO.output(21, GPIO.HIGH)

def pivot_left():
    # left wheel stopped, right wheel forward
    GPIO.output(5, GPIO.LOW)
    GPIO.output(6, GPIO.LOW)
    pwm_r.ChangeDutyCycle(80)
    GPIO.output(20, GPIO.HIGH)
    GPIO.output(21, GPIO.LOW)

def pivot_right():
    # left wheel forward, right wheel stopped
    pwm_l.ChangeDutyCycle(100)
    GPIO.output(5, GPIO.HIGH)
    GPIO.output(6, GPIO.LOW)
    GPIO.output(20, GPIO.LOW)
    GPIO.output(21, GPIO.LOW)

def GPIO_event_init():
    GPIO.add_event_detect(17, GPIO.FALLING, callback=GPIO17_callback, bouncetime=300)
    GPIO.add_event_detect(22, GPIO.FALLING, callback=GPIO22_callback, bouncetime=300)
    GPIO.add_event_detect(27, GPIO.FALLING, callback=GPIO27_callback, bouncetime=300)

# tag ID sequences that identify each route
P238_tag_list = [0]
P0_tag_list = [10]
P1_tag_list = [11]
P2_tag_list = [12]

def motor_rolling(detect2motor_queue, pwm_l, pwm_r):
    #print("motor_rolling")
    global l_cyc
    global r_cyc
    global run_flag
    global activate
    global arrive_flag
    pwm_l.start(0)
    pwm_r.start(0)
    time0 = time.time()
    destination = 3
    route_tag_list = []
    while(run_flag.value == 1 and arrive_flag.value == 0):
        if(activate.value):
            #destination = screen2motor_queue.get()
            motor_queue_list = detect2motor_queue.get()
            # after press 'q' to terminate video stream capture
            # (checked before the message is unpacked as [tag_ids, distance, err])
            if(motor_queue_list == "terminate"):
                run_flag.value = 0
                break
            tag_id_list = motor_queue_list[0]
            tag_id = -1
            if(len(tag_id_list) == 0):
                tag_id = -1
            else:
                tag_id = tag_id_list[0]
                if tag_id not in route_tag_list:
                    route_tag_list.append(tag_id)
            distance = motor_queue_list[1]
            err = motor_queue_list[2]
            print(err)
            print(route_tag_list)
            # steer back toward the tag when it drifts off the frame centre
            if(err > 0.3):
                l_cyc = 82
                r_cyc = 95
            elif(err < -0.3):
                l_cyc = 95
                r_cyc = 82
            else:
                l_cyc = 95
                r_cyc = 89
            #print('------------------')
            #print("tag_id:{0}, distance:{1} in motor".format(tag_id, distance))
            #print("route_tag_list:{0}".format(route_tag_list))
            # the entire rolling logic
            if(destination == -1):
                #print("wait to start")
                motor_stop()
            elif(destination == 3 and route_tag_list == P0_tag_list and 0 < distance < 0.8):
                #print("Arrive P238")
                pivot_right()
                time.sleep(0.6)
                move_forward()
                time.sleep(1)
                arrive_flag.value = 1
            elif(destination == 3 and route_tag_list == P1_tag_list and 0 < distance < 0.8):
                #print("Arrive P")
                pivot_right()
                time.sleep(0.6)
                move_forward()
                time.sleep(1.5)
                arrive_flag.value = 1
            elif(destination == 3 and route_tag_list == P2_tag_list and 0 < distance < 0.5):
                #print("Arrive P")
                pivot_right()
                time.sleep(0.8)
                move_forward()
                time.sleep(1.5)
                arrive_flag.value = 1
            elif(destination == 3 and route_tag_list == P238_tag_list and 0 < distance < 0.8):
                #print("Arrive anthoy")
                pivot_left()
                time.sleep(0.55)
                move_forward()
                time.sleep(1.5)
                #arrive_flag.value = 1
            else:
                #print("Keep Running")
                move_forward()
                route_tag_list = []
        #except KeyboardInterrupt:
        #    print("except")
        #    run_flag.value = 0
        #    pwm_l.stop()
        #    pwm_r.stop()
        #    GPIO.cleanup()
        # stop after 180 s regardless of progress
        time1 = time.time()
        if(time1 - time0 > 180):
            break
    run_flag.value = 0
    arrive_flag.value = 1
    pwm_l.stop()
    pwm_r.stop()
    GPIO.cleanup()
    quit()

#----------------------------
# Pygame initialization
#----------------------------

WHITE = 255, 255, 255
BLACK = 0, 0, 0

'''
os.putenv('SDL_VIDEODRIVER', 'fbcon') #display on piTFT
os.putenv('SDL_FBDEV', '/dev/fb0')
os.putenv('SDL_MOUSEDRV', 'TSLIB') #track mouse clicks on piTFT
os.putenv('SDL_MOUSEDEV', '/dev/input/touchscreen')
pygame.init()
pygame.mouse.set_visible(False)

# set screen
screen = pygame.display.set_mode((320, 240))
screen.fill(BLACK)
id_title = 'not detected'
id_pos = (160, 120)
my_font_0 = pygame.font.Font(None, 40)
my_button_0 = 'Start'
my_button_0_pos = (160, 120)
my_font_1 = pygame.font.Font(None, 40)
dest_button = {'P238':(40, 40), 'P':(40, 120)}
P238_title = {'Destination':(120, 20), 'P238':(200, 20)}
P238_state = ['Tag1', 'No detection']
P238_state_pos = [(40, 80), (100, 80)]
P_title = {'Destination':(120, 20), 'P2':(200, 20)}
P_state = ['Tag1', 'No detection', 'Tag2', 'No detection']
P_state_pos = []

# initialize button
def draw_start_button():
    start_surface = my_font_0.render(my_button_0, True, WHITE)
    start_rect = start_surface.get_rect(center=(my_button_0_pos))
    screen.blit(start_surface, start_rect)

def draw_dest_button():
    for dest, dest_pos in dest_button.items():
        dest_surface = my_font_1.render(dest, True, WHITE)
        dest_rect = dest_surface.get_rect(center=(dest_pos))
        screen.blit(dest_surface, dest_rect)

# initialize title
def draw_title(destination):
    if destination == 1:
        room_title = P238_title
    elif destination == 2:
        room_title = P_title
    for title, title_pos in room_title.items():
        title_surface = my_font_1.render(title, True, WHITE)
        title_rect = title_surface.get_rect(center=title_pos)
        screen.blit(title_surface, title_rect)

# initialize state label
def draw_state_label(destination):
    if destination == 1:
        room_state = P238_state
        room_state_pos = P238_state_pos
    elif destination == 2:
        room_state = P_state
        room_state_pos = P_state_pos
    for idx in range(len(room_state)):
        state_surface = my_font_1.render(room_state[idx], True, WHITE)
        state_rect = state_surface.get_rect(center=room_state_pos[idx])
        screen.blit(state_surface, state_rect)

def show_screen(detect2motor_queue):
    global run_flag
    global arrive_flag
    route_tag_id = []
    tag_str = ""
    while(arrive_flag.value == 0 and run_flag.value == 1):
        screen.fill(BLACK)
        motor_queue_list = detect2motor_queue.get()
        #print(motor_queue_list)
        if(len(motor_queue_list[0])):
            tag_id_list = motor_queue_list[0]
            tag_id = tag_id_list[0]
            if tag_id not in route_tag_id:
                route_tag_id.append(tag_id)
                id_title = str(tag_id)
                tag_str += id_title + ", "
        id_surface = my_font_1.render(tag_str, True, WHITE)
        id_rect = id_surface.get_rect(center=(id_pos))
        screen.blit(id_surface, id_rect)
        pygame.display.flip()
        time.sleep(0.5)
    exit()
'''

'''
# draw start button
draw_start_button()
pygame.display.flip()
start = 0
choose_destination = 1
destination = -1
while(arrive_flag.value == 0):
    screen.fill(BLACK)
    if start == 0:
        draw_start_button()
    #else:
    #    draw_dest_button()
    # ----detect screen touch event----
    for event in pygame.event.get():
        if(event.type == MOUSEBUTTONDOWN):
            pos = pygame.mouse.get_pos()
        elif(event.type == MOUSEBUTTONUP):
            pos = pygame.mouse.get_pos()
            x, y = pos
            if start == 0 and 140 < x < 180 and 100 < y < 140:
                start = 1
                activate.value = 1
            elif start == 1 and choose_destination == 1:
                # select the destination
                draw_dest_button()
                if 0 < x < 80 and 0 < y < 80:
                    destination = 1
                    choose_destination = 0
                elif 0 < x < 80 and 80 < y < 160:
                    destination = 2
                    choose_destination = 0
                screen2motor_queue.put(destination)
                #choose_destination = 0
    if start == 1 and choose_destination == 0:
        draw_title(destination)
        draw_state_label(destination)
    pygame.display.flip()
'''

#----------------------------
#---------tag Detect---------
#----------------------------

def tagDetect(image):
    #path = "/home/pi/lab_final/images/tag36h11_0.png"
    # image = cv2.imread(path)
    # convert the input frame to grayscale
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
    # define the AprilTag detector and detect the AprilTags in the input frame
    #print("Detecting tags")
    options = apriltag.DetectorOptions(families="tag36h11")
    detector = apriltag.Detector(options)
    results = detector.detect(gray)
    #print("{0} total AprilTags Detected".format(len(results)))
    avg_tagWidth = 0
    tag_ids = []
    err = 0.0
    # loop over the AprilTag detection results
    for r in results:
        tag_ids.append(r.tag_id)
        # extract the corner coordinates (x, y) and convert them to integer pairs
        (ptA, ptB, ptC, ptD) = r.corners
        ptA = (int(ptA[0]), int(ptA[1]))
        ptB = (int(ptB[0]), int(ptB[1]))
        ptC = (int(ptC[0]), int(ptC[1]))
        ptD = (int(ptD[0]), int(ptD[1]))
        avg_tagWidth += abs(ptA[0] - ptB[0])
        # gaps between the tag's left/right edges and the frame's left/right edges
        d_left = int((ptA[0] + ptD[0])/2)
        d_right = image.shape[1] - int((ptB[0] + ptC[0])/2)
        err = 2*(d_right - d_left)/(d_right + d_left)
        # draw the bounding box of the AprilTag detection
        cv2.line(image, ptA, ptB, (0, 255, 0), 2)
        cv2.line(image, ptB, ptC, (0, 255, 0), 2)
        cv2.line(image, ptC, ptD, (0, 255, 0), 2)
        cv2.line(image, ptD, ptA, (0, 255, 0), 2)
        # draw the center (x, y) coordinates of the AprilTag
        (cX, cY) = (int(r.center[0]), int(r.center[1]))
        cv2.circle(image, (cX, cY), 5, (0, 0, 255), -1)
        # draw the tag family on the image
        tagFamily = r.tag_family.decode("utf-8")
        cv2.putText(image, tagFamily, (ptA[0], ptA[1] - 15),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 255, 0), 2)
        #print("tag family : {0}".format(tagFamily))
    # average the tag width over all detections (0 when no tag is found)
    if results:
        avg_tagWidth /= len(results)
    return image, avg_tagWidth, tag_ids, err

#----------------------------
#--------get distance--------
#----------------------------

# calibration constants
KNOW_DISTANCE = 0.7    # distance of the calibration image (m)
KNOW_WIDTH = 0.173     # physical tag width (m)
FOCAL_LENGTH = 790     # calibrated focal length (pixels)

def get_focalLength(frame, avg_tagWidth):
    return KNOW_DISTANCE * avg_tagWidth / KNOW_WIDTH

def getDistance(detect2motor_queue, threshold):
    # capture the video stream
    video_stream = cv2.VideoCapture(0)
    distance = -1
    detect = 0
    turn = 0
    tag_ids = []
    global run_flag
    global arrive_flag
    while (run_flag.value == 1 and arrive_flag.value == 0):
        if(activate.value == 1):
            try:
                # acquire video stream; skip frames that fail to read
                ret, frame = video_stream.read()
                if not ret:
                    continue
                # generate detected image
                image, avg_tagWidth, tag_ids, err = tagDetect(frame)
                # calculate distance with the pinhole model: D = W * F / w
                if(math.isclose(avg_tagWidth, 0.0) == False):
                    distance = KNOW_WIDTH * FOCAL_LENGTH / avg_tagWidth
                    detect = 1
                else:
                    distance = -1
                    detect = 0
                # detect corner, when to turn
                #if(distance < threshold):
                #    turn = 1
                #    send_motor_queue.put(turn)
                motor_queue_list = []
                motor_queue_list.append(tag_ids)
                motor_queue_list.append(distance)
                motor_queue_list.append(err)
                #print("motor_queue_list:", motor_queue_list)
                detect2motor_queue.put(motor_queue_list)
                # show image
                #cv2.namedWindow("Detection", cv2.WINDOW_NORMAL)
                #cv2.resizeWindow("Detection", 320, 160)
                #font = cv2.FONT_HERSHEY_TRIPLEX
                #if(detect == 1):
                #    cv2.putText(image, 'D: {0}'.format(round(distance, 2)), (20, 100), font, 3, (0, 0, 255), 3)
                #else:
                #    cv2.putText(image, 'NO TAG', (20, 100), font, 3, (0, 0, 255), 3)
                #cv2.imshow("Detection", image)
                if cv2.waitKey(10) & 0xFF == ord('q'):
                    break
            except KeyboardInterrupt:
                run_flag.value = 0
    # tell the motor process to stop, however this loop ended
    detect2motor_queue.put("terminate")
    video_stream.release()
    #cv2.destroyAllWindows()

#-----------------------
#-------main------------
#-----------------------

if __name__ == "__main__":
    time.sleep(2)
    # init GPIO
    GPIO_init()
    GPIO_event_init()
    # create the PWM instances (50 Hz)
    pwm_l = GPIO.PWM(26, 50)
    pwm_r = GPIO.PWM(16, 50)
    # run_flag is used to safely exit all processes
    run_flag = Value('i', 1)
    activate = Value('i', 1)
    arrive_flag = Value('i', 0)
    # inter-process queue
    detect2motor_queue = Queue()
    #screen2motor_queue = Queue()
    p_start_lock = Lock()
    p_end_lock = Lock()
    # start processes
    threshold = 0.5
    #p0 = Process(target=show_screen, args=(detect2motor_queue, ))
    p1 = Process(target=motor_rolling, args=(detect2motor_queue, pwm_l, pwm_r))
    p2 = Process(target=getDistance, args=(detect2motor_queue, threshold))
    #p0.start()
    p1.start()
    p2.start()
    # wait for processes to exit safely
    #p0.join()
    p1.join()
    p2.join()
    pwm_l.stop()
    pwm_r.stop()
    GPIO.cleanup()
    print("quit")
    exit()
```
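The main block wires the detection process (`getDistance`) to the motor process (`motor_rolling`) with a `Queue` and shared `Value` flags. The following is a minimal, hardware-free sketch of that producer/consumer pattern; `detector`, `controller` and `run_pipeline` are hypothetical stand-ins for the real process functions, and the explicit `fork` context is an assumption (it is available on Linux, including Raspberry Pi OS). The consumer checks for the `"terminate"` sentinel before it unpacks a message, and the parent drains the results queue before calling `join()`, which avoids the deadlock the `multiprocessing` documentation warns about when joining a process that still has items buffered on a queue.

```python
import multiprocessing as mp

SENTINEL = "terminate"  # same end-of-stream marker the appendix code uses


def detector(queue, run_flag, readings):
    """Producer: stands in for getDistance(), sending [tag_ids, distance, err] lists."""
    for message in readings:
        if run_flag.value == 0:
            break
        queue.put(message)
    queue.put(SENTINEL)  # always unblock the consumer, however the loop ends


def controller(queue, run_flag, results):
    """Consumer: stands in for motor_rolling(); checks the sentinel before unpacking."""
    while run_flag.value == 1:
        message = queue.get()
        if message == SENTINEL:
            run_flag.value = 0
            break
        tag_ids, distance, _err = message
        tag_id = tag_ids[0] if tag_ids else -1
        results.put((tag_id, distance))
    results.put(None)  # tell the parent that no more results will follow


def run_pipeline(readings):
    ctx = mp.get_context("fork")
    queue, results = ctx.Queue(), ctx.Queue()
    run_flag = ctx.Value("i", 1)
    procs = [
        ctx.Process(target=detector, args=(queue, run_flag, readings)),
        ctx.Process(target=controller, args=(queue, run_flag, results)),
    ]
    for p in procs:
        p.start()
    collected = []
    while (item := results.get()) is not None:  # drain before join()
        collected.append(item)
    for p in procs:
        p.join()
    return collected


if __name__ == "__main__":
    print(run_pipeline([[[10], 0.9, 0.1], [[], -1, 0.0], [[11], 0.4, -0.5]]))
```

Sending the sentinel from the producer's exit path, rather than only from an exception handler, guarantees that a consumer blocked in `queue.get()` always wakes up and can shut the motors down.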